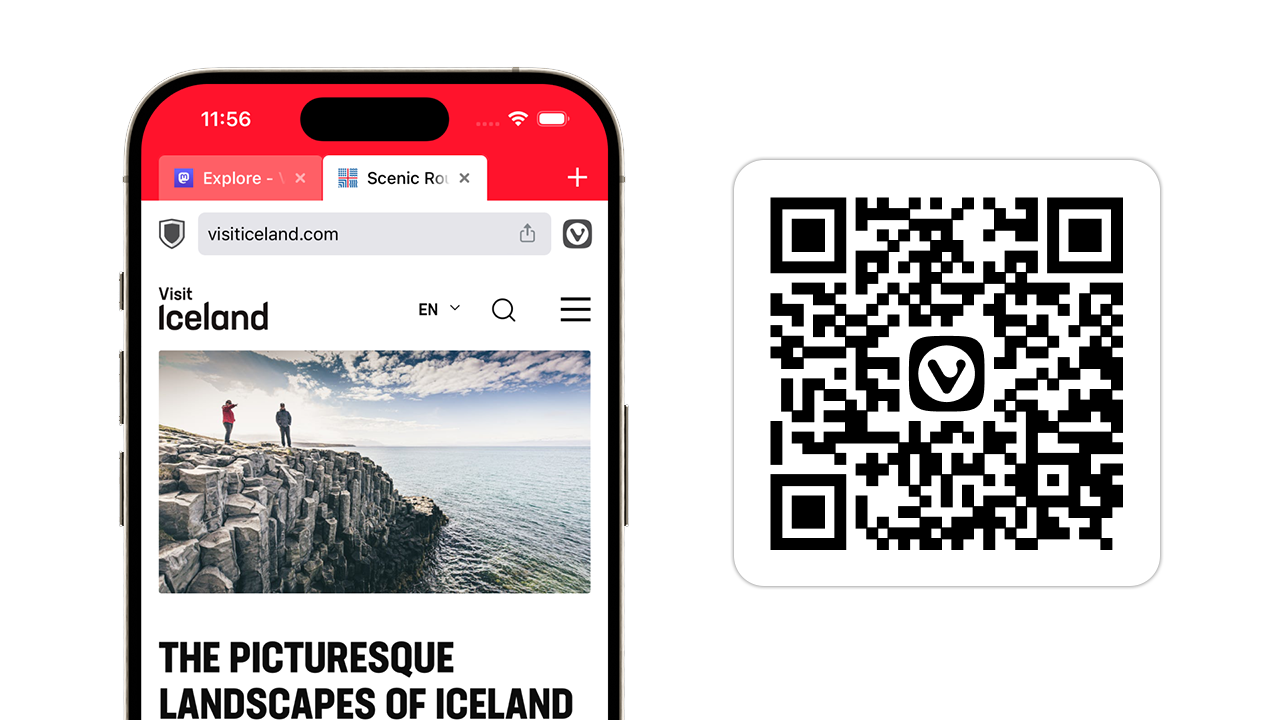

Vivaldi is your browser.

Get involved.

Do you like Vivaldi and share our company values? Get involved to support our mission and help us grow!

Become a volunteer

There are different ways for you to help, depending on how much time you want to invest and what your interests are. We’d love to have you on board!

Member spotlight

Ayespy

Hi! My name is Bruce Hamilton and I’m from the Southwest desert region of the United States.

I’ve been using Vivaldi since 2015 on Windows, Linux and Android.

My top 5 Vivaldi features are:

- Vertical Tab Bar

- Configurable Toolbars

- Built-in email client – Vivaldi Mail

- Stackable Pinned Tabs

- Full-UI theming with graphic background of one’s choice (in my case the background is my desktop slideshow)

What I love about the Vivaldi Community is the helpfulness, the pleasant attitude, and the actual sense of community.

Interesting fact about me: I’m old (over 69), I’ve been a safety consultant and private investigator since 1980, and I’ve been a personal computer user and tech-friendly since 1977.

Want to be featured here? Fill out this form.

Tip of the day

Tip #476

Enable compact layout of menus if you prefer your menu items to be more tight-knit.

Vivaldia Game

Play the new Vivaldia game

Vivaldia 2 is now available to play for free on our website and on Steam.

Vivaldi Store

Gear up with our merchandise

Show your support for Vivaldi by getting a Vivaldi t-shirt, mug, water bottle, stickers and more. We deliver worldwide.

Vivaldi Store

Latest from the team

Vivaldi boosts performance with Memory Saver and auto-detects feeds with its Feed Reader

Vivaldi now reduces memory usage by automatically hibernating inactive tabs, auto-detects more feeds with its Feed Reader on websites like Reddit and GitHub, allows creating Workspaces out of tab selections with a right-click, includes a window split-view for apps on Mac, and more.

Swimming with the President of Iceland: President Guðni dives into the Vivaldi office

Find out what President Guðni Thorlacius Jóhannesson and his wife First Lady Eliza Jean Reid have in common with our Software Engineer Geir Gunnarson and why it involves the ocean.

Women in tech are here to stay

Every year we celebrate Women's Day by sharing stories about the women whose tech journey is somehow bound to Vivaldi. Get to know some of our female Ambassadors!

Featured Community blog posts

Stop Killing Games - Why Care?

Earlier this month the Stop Killing Games campaign launched. It started as a campaign around one particular game - The Crew, which was shut down by publishers. Now, I never played The Crew, and don't miss it, but I…

4 days ago

By lonm

Blue balloon day

A glorious summer day they took me to the park to play and it was oh so lively full of people kids and dogs and big smiles all around Daddy definitely wasn’t rich Yet Mom she was good looking I was a big-eyed kid of 5 skinny…

2 weeks ago

By canardlilies

Total Solar Eclipse

There are so many infinite reasons I love Montreal, the total solar eclipse being one of them. Collective awe being another, and the waft of smoke from sausages grilling in Jeanne-Mance, on a perfect spring summer day,…

2 weeks ago

By gracema

Vivaldi on social media

Meet new and familiar community members from around the world.

See more social links